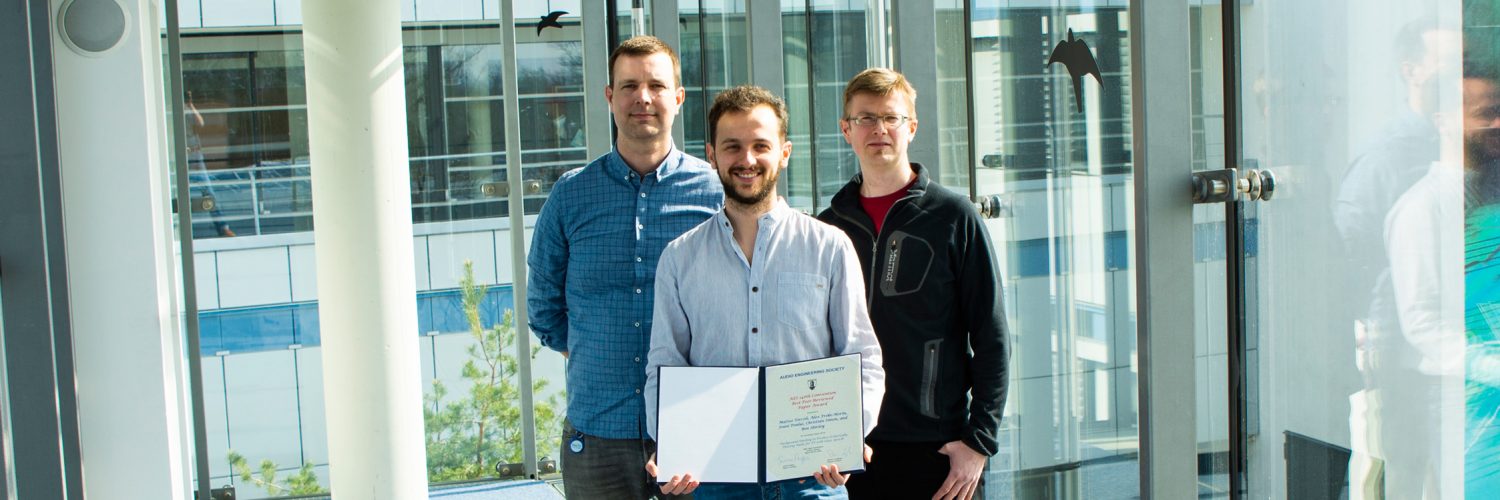

Fraunhofer IIS was honored twice at the recent 146th AES Convention in Dublin, Ireland. Christian Uhle received the AES Board of Governors Award in recognition of co-chairing the 2017 International AES Conference on Semantic Audio, and the “Best Paper” award went to “Background Ducking to Produce Esthetically Pleasing Audio for TV with Clear Speech,” written by Fraunhofer IIS scientists Matteo Torcoli, Jouni Paulus and Christian Simon in collaboration with Alex Freke-Morin and Professor Ben Shirley from the University of Salford, UK.

The paper addresses the well-known issue of low intelligibility of foreground speech – such as dialogue and commentary – in television programs, which usually occurs when the background is too loud compared to the speech. The dilemma is that music and effects are essential for full understanding and enjoyment of a show, but they can energetically mask speech, making it impossible or tiring to understand.

Next Generation Audio systems like MPEG-H Audio can solve this problem, as the object-based nature of the MPEG-H system enables viewers to adjust the level of the dialogue relative to the background to their needs via the Dialogue Enhancement feature. However, broadcasters will still need to produce a default mix, and it should satisfy as many listeners as possible.

To improve the intelligibility of foreground speech in default mixes while keeping the background track enjoyable, producers can use background ducking, which the paper’s authors define as “any time-varying background attenuation with the aim of making the foreground speech clear.” However, technical details and best practices for esthetically well-tuned ducking have so far not been documented in mixing handbooks or broadcaster recommendations. Often, the only recommendation given in the literature is that foreground speech must be “comprehensible” and “clear.” The authors wanted to change this situation, especially because accessibility is becoming more important as the television audience grows older.

This led to the first study investigating desirable loudness differences (LDs) between foreground speech and background during ducking. Previous works had considered only a static background level. The paper analyzes common ducking practices found in a sample of TV documentaries and reports the results of a subjective test conducted by Alex Freke-Morin during his M.Sc. project at Fraunhofer IIS. The test investigated the LD preferences of 22 listeners with normal hearing (11 expert, 11 non-expert). Related works were also reviewed and compared to these findings.

One of the most striking results of the test is a significant discrepancy between the preferences of non-expert and expert listeners. On average, non-experts prefer LDs that are four loudness units (LUs) higher than those preferred by the experts, the people who actually produce audio mixes. To attain an esthetically pleasing default mix with clear speech, the authors therefore recommend an LD of at least 10 LUs for commentary over music and at least 15 LUs for commentary over ambience, with location dialogue requiring higher LDs.
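As a rough illustration of how these recommended minimums could be applied, the following Python sketch computes how much further a background would need to be attenuated during ducking. It is not taken from the paper: the function and constant names are hypothetical, the loudness values are assumed to have been measured beforehand (for example in LUFS with a standard loudness meter), and it relies on the fact that lowering a signal's gain by x dB lowers its measured loudness by x LU.

```python
# Minimum loudness differences (LDs) recommended by the paper's authors, in LU.
# The dictionary name and keys are illustrative, not from the paper.
RECOMMENDED_MIN_LD_LU = {
    "music": 10.0,     # commentary over music
    "ambience": 15.0,  # commentary over ambience
}


def extra_background_attenuation(speech_lufs, background_lufs, background_type):
    """Return the additional background attenuation (in dB) needed so that
    the LD between speech and background reaches the recommended minimum.

    speech_lufs and background_lufs are assumed to be loudness values
    measured elsewhere; this function only does the arithmetic.
    """
    target_ld = RECOMMENDED_MIN_LD_LU[background_type]
    current_ld = speech_lufs - background_lufs  # LD in LU
    # No extra attenuation is needed if the LD already meets the target.
    return max(0.0, target_ld - current_ld)


if __name__ == "__main__":
    # Hypothetical measurements: commentary at -23 LUFS, music at -29 LUFS.
    print(extra_background_attenuation(-23.0, -29.0, "music"))
    # Hypothetical measurements: commentary at -23 LUFS, ambience at -40 LUFS.
    print(extra_background_attenuation(-23.0, -40.0, "ambience"))
```

In the first hypothetical case the LD is 6 LUs, so the music would need a further 4 dB of attenuation to reach the 10 LU minimum; in the second, the LD of 17 LUs already exceeds the 15 LU minimum. Location dialogue is left out because the source gives no specific figure for it.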

“There are so many examples in television that are below these numbers and have a very loud background. While they might be very nicely made and entertaining, it is no surprise that intelligibility drops. Having a loud background for artistic reasons is absolutely OK, but sound engineers need to be aware that they might lose part of the audience – unless the viewers have MPEG-H at home!” concludes Matteo Torcoli, who accepted the Convention Paper Award certificate on behalf of the whole group of authors.

The award-winning paper is now available as an open access document in the AES e-library.

Header image © Manuela Wamser – Fraunhofer IIS
